|

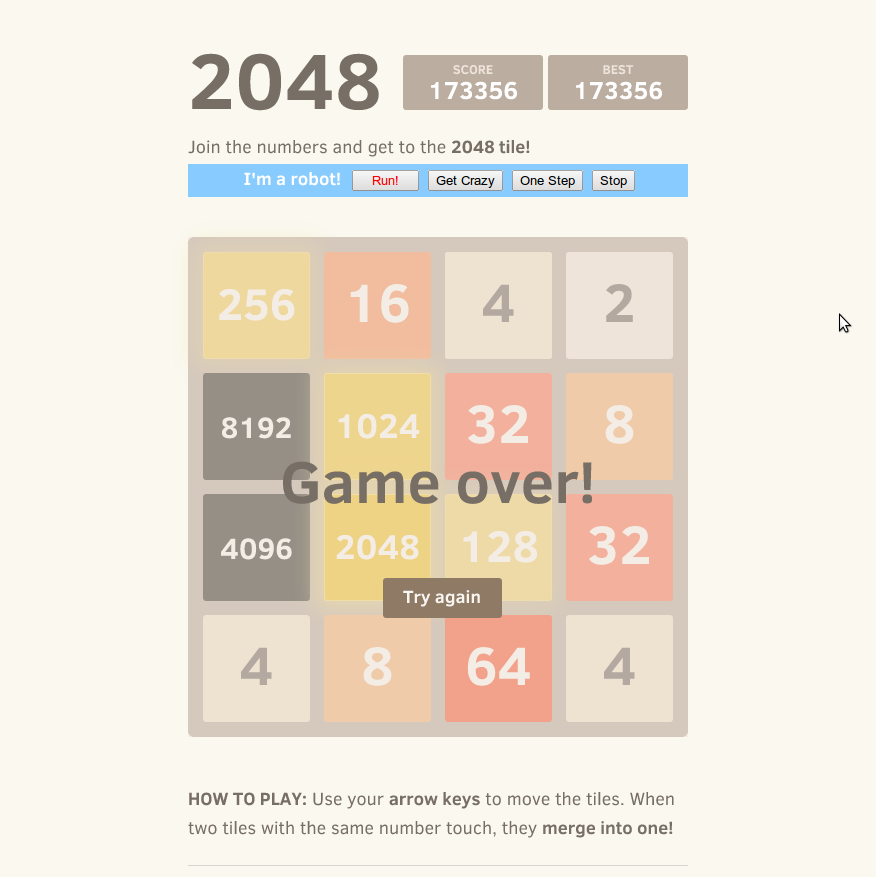

In fact, there's a clear trend in which games reach a landmark game score like 2048 or 4096, but don't survive long enough to get the tile itself. None of the simulations reached a tile beyond 4096.įor game score, all trials reached at least 1024, including the two trials which didn't get the 1024 tile itself. In all of the 50 simulations done, 96% reached at least the 1024 tile, 62% reached at least the 2048 tile and 2% reached the 4096 tile.

I have personally done runs with 1500 simulations per move and have achieved much better results there while sacrificing speed in the process. The default 200 simulations per move used is meant to be a baseline so that the AI runs a decent speed at all tiers of devices including phones and tablets as well as desktops and laptops. Milliseconds Taken to Calculate Optimal Move.40.8s/trial), storing data after every move, including: I ran 50 trial games with the AI at 200 simulations per move in about 34 minutes (avg. Landmark Score/Tile: a high tile or score of a power of two like 512, 1024, 2048, or 4096Īnalyzing the Performance of the Algorithm.Real Game: the game that is being played and shown on the browser, not a simulation.Game Score: the sum of all the tiles on the board.Game State: a set of tiles on the board which represents the board at a specific time.Speed: how fast the AI performs in real-world speed running on the web in JavaScript, in which a higher speed to perform moves would be better.Performance: how well the AI performs at the end of each game, in which a higher final game score would be better.Let's start with a few definitions that are relevant to the rest of the article: In this article, I'll be analyzing the performance and speed of Jupiter's algorithm with empirical data, and note potential improvements made to the algorithm and its JavaScript implementation on the web. In this case, the optimal move would be left since the algorithm optimizes for the move that yields the highest final game score. Support there were 50 simulations for each of the right, up, and down moves, and the average score for the 50 simulations in each of those was only 225. We can then find the optimal move by optimizing for the highest final game score.įor example, there could be 50 simulations where the first move was left, in which the average score for those simulations was 250. The algorithm can then gather the total final game scores (sum of all the tiles on the board) of all the simulations, and average them for each set. For each set of simulations, the algorithm starts by playing the move for that set first.Īfter that, the rest of the game can be played completely randomly until it is over.

To find the optimal move at any given position, the program conducts a set of simulations for each possible move in that position (ex: left, right, up, or down). Here's a brief summary of the algorithm that you can feel free to skip if you've read the above article or you understand it already: I've written an article on how this algorithm works, how it can be implemented, and where MCTS can be useful.

The AI uses the Monte Carlo Tree Search (MCTS) algorithm, which makes moves based on the results of many simulations of random games, also known as Monte-Carlo simulations. I recently worked on an open source project called Jupiter, an online AI written in JavaScript to beat the popular online game 2048.

0 Comments

Leave a Reply. |

AuthorWrite something about yourself. No need to be fancy, just an overview. ArchivesCategories |

RSS Feed

RSS Feed